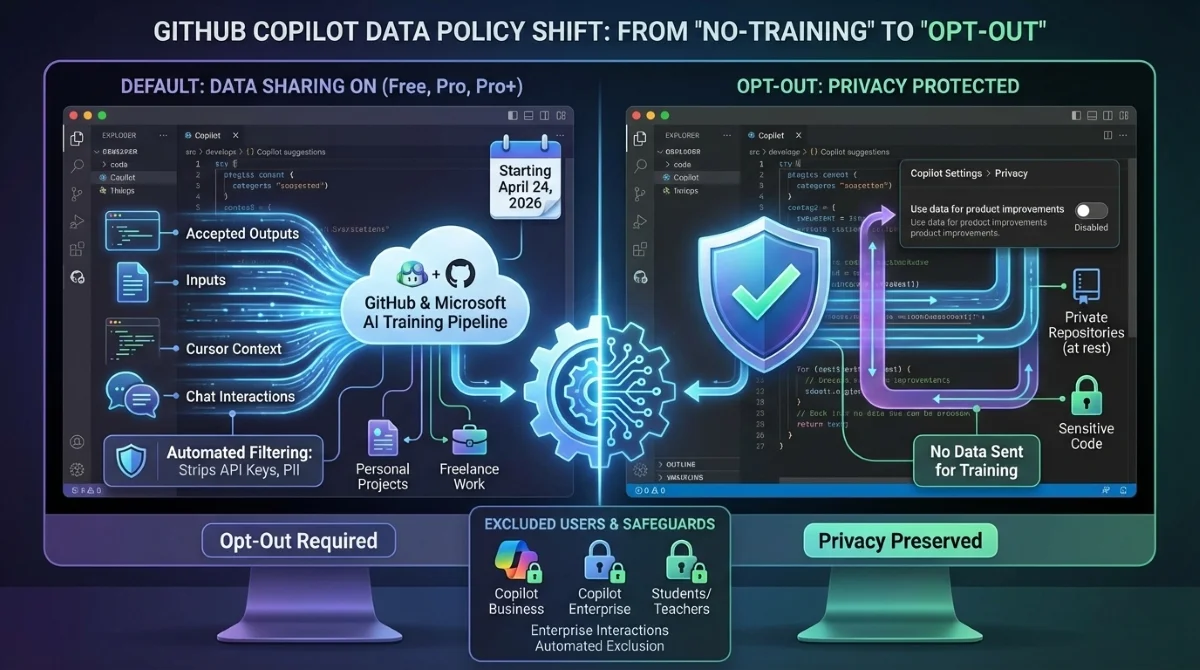

GitHub is fundamentally changing how it handles developer data, announcing that starting April 24, 2026, it will use interaction data from Copilot Free, Pro, and Pro+ users to train its AI models. This approach aligns with established industry practices, but it shifts the platform from a strict no-training stance to an opt-out model for individual developers. Copilot Business and Copilot Enterprise users remain completely exempt from this update.

For the 26 million developers relying on the AI assistant, this means your active code snippets, prompts, and chat interactions will be fed back into the system by default. If you are working on proprietary personal projects or sensitive freelance work, you must take immediate action to protect your codebase. GitHub argues this shift is necessary to build smarter, context-aware models that can better understand complex development workflows.

After successfully testing this approach using internal Microsoft employee data, the company claims real-world interaction data significantly improves the AI's ability to catch bugs and suggest accurate patterns. The company maintains that the future of AI-assisted development depends on real-world interaction data, moving beyond the initial models built solely on publicly available data and hand-crafted samples.

What Data GitHub is Collecting (and What is Safe)

The new policy casts a wide net over active coding sessions. However, GitHub explicitly states it will not pull code from private repositories "at rest," meaning it only captures what you actively send to the model during a live session.

- Collected Data: Accepted or modified outputs, inputs sent to Copilot, cursor context, comments, repository structure, and chat interactions.

- Excluded Users: Copilot Business, Copilot Enterprise, Copilot Student, and teachers with free Copilot Pro access.

- Enterprise Safeguard: If a personal account collaborates on a paid organization's repository, that interaction data is automatically excluded from training.

To address security concerns, GitHub has implemented automated filtering designed to strip out sensitive information like API keys, passwords, and personally identifiable information before the data reaches the training pipeline. The data will only be shared with GitHub affiliates, primarily Microsoft, and will not be sold to third-party AI providers.

How to Opt Out of Copilot Data Collection

If you prefer to keep your coding habits private, GitHub allows you to opt out of this data collection entirely. If you previously disabled prompt and suggestion collection, GitHub will honor that existing preference automatically.

- Navigate to your GitHub account settings.

- Click on the Copilot section in the sidebar.

- Go to the Privacy tab.

- Disable the setting that allows GitHub to use your data for product improvements.

The "My Take" on GitHub's Data Strategy

This policy update highlights a critical pivot in the AI arms race: the transition from scraping public data to harvesting active user interactions. By making this an opt-out policy rather than opt-in, GitHub is leveraging the inertia of its massive user base to secure a high-quality, real-world training dataset. The fact that Microsoft employee data already yielded "meaningful improvements" proves that synthetic data is no longer enough to maintain a competitive edge.

While GitHub justifies this by pointing to similar moves by Anthropic and JetBrains, the decision to exclude Enterprise customers while defaulting individual Pro users to data sharing creates a distinct privacy tiering system. Developers must now treat their code editor as an active participant in AI training, making the opt-out toggle a mandatory first step for anyone handling sensitive, non-enterprise code.