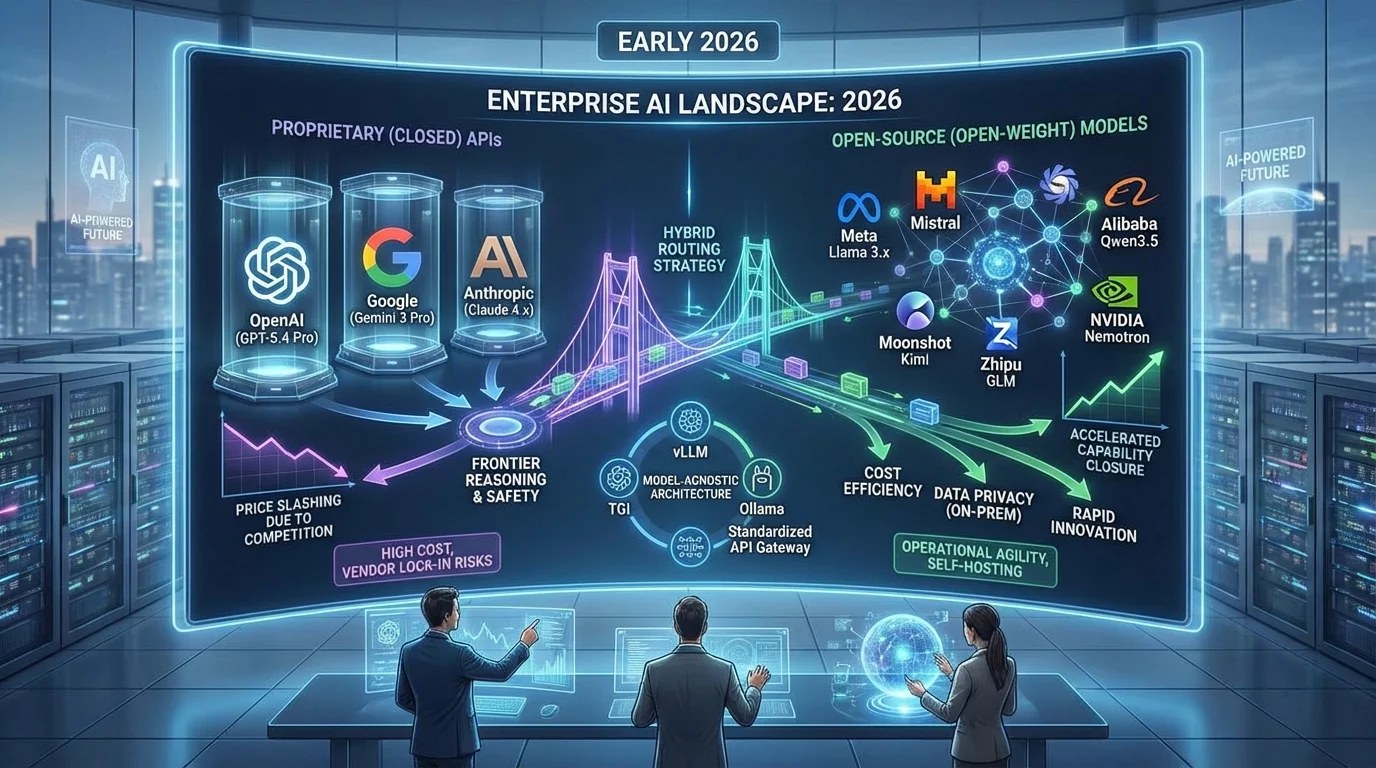

The days of defaulting to OpenAI or Google for enterprise artificial intelligence are officially over. As of early 2026, the capability gap between proprietary application programming interfaces (APIs) and open-weight models has closed dramatically, fundamentally altering the economics of machine learning. Organizations are no longer forced to choose between exorbitant API costs and subpar performance.

This comprehensive analysis is designed for enterprise leaders, software developers, and AI architects who must navigate the complex "build versus buy" dilemma. By understanding the latest benchmarks, pricing structures, and deployment frameworks, decision-makers can optimize their infrastructure budgets, ensure strict data privacy, and avoid long-term vendor lock-in. The strategic choices made today will dictate an organization's operational agility for years to come.

The rapid convergence of AI capabilities is reshaping market dynamics. Competitive pressure from open-source communities has forced proprietary providers to continuously slash API pricing, driving a structural shift in how digital businesses operate. Maintaining the ability to switch between open and closed ecosystems is now a mandatory architectural requirement.

The Current Landscape: Closed vs. Open Leaders

The proprietary AI sector remains dominated by three major players, each offering distinct advantages. OpenAI continues to set the frontier benchmark with its ChatGPT, o1, and the newly tracked GPT-5.4 Pro models, offering unparalleled reasoning and commercial polish. However, this ecosystem demands premium pricing and locks users into proprietary infrastructure.

Anthropic maintains a strong position with its Claude 3 and 4.x families, specifically leading in extended context windows, coding tasks, and rigorous safety guardrails. Google rounds out the top tier with its Gemini 3 Flash and Pro previews, leveraging deep multimodal strengths and seamless integration within the Google Workspace ecosystem.

Conversely, the open-source movement has accelerated beyond industry expectations. Meta's Llama 3.x family serves as the flagship open model, scaling from 8B to massive 405B parameter versions, with Llama 3.2 introducing crucial multimodal capabilities. Mistral, the European AI laboratory, continues to deliver exceptional efficiency-to-capability ratios, particularly in coding performance.

Meanwhile, Alibaba's Qwen series, notably the Qwen3.5 and Qwen3 VL models, has emerged as a dominant force. Often overlooked in Western markets, Qwen consistently matches or beats Llama on global benchmarks, especially in multilingual and vision-based applications.

Head-to-Head Performance and Pricing Data

Tracking across 300 distinct AI models reveals a stark contrast in how the two ecosystems operate. While proprietary models maintain a slight edge in absolute peak performance, open-source alternatives offer overwhelming advantages in cost and accessibility.

| Metric | Open Source | Proprietary |
|---|---|---|
| Total Models Tracked | 145 | 155 |
| Average Score | 67.6 | 72.1 |
| Best Model Score | 85.0 (Qwen3.5-9B) | 94.0 (GPT-5.4 Pro) |
| Average Cost (per 1M tokens) | $0.562 | $9.69 |
| Free Models Available | 21 | 3 |
| Average Context Window | 149K tokens | 425K tokens |

The data highlights a massive financial disparity. The average proprietary model costs roughly 17 times more per million tokens than its open-source counterpart ($9.69 versus $0.562). However, proprietary models offer significantly larger context windows, averaging 425K tokens compared to 149K for open models, which makes them better suited to very large document analysis.
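The ratio can be checked directly from the two averages in the table. The snippet below is a minimal sketch of that arithmetic; the 500M-token monthly workload is a hypothetical figure chosen only for illustration, and the calculation covers token spend alone, not hosting or staffing.

```python
# Average prices from the comparison table (USD per 1M tokens).
OPEN_AVG_COST = 0.562
PROPRIETARY_AVG_COST = 9.69


def cost_ratio(proprietary: float, open_source: float) -> float:
    """How many times more expensive the proprietary average is."""
    return proprietary / open_source


def monthly_token_spend(tokens_per_month: int, price_per_million: float) -> float:
    """Token spend in USD; ignores hosting, staffing, and other overhead."""
    return tokens_per_month / 1_000_000 * price_per_million


ratio = cost_ratio(PROPRIETARY_AVG_COST, OPEN_AVG_COST)
print(f"Proprietary / open-source cost ratio: {ratio:.1f}x")

# Hypothetical workload, used only to make the gap concrete.
WORKLOAD = 500_000_000
print(f"Open-source spend:  ${monthly_token_spend(WORKLOAD, OPEN_AVG_COST):,.2f}")
print(f"Proprietary spend:  ${monthly_token_spend(WORKLOAD, PROPRIETARY_AVG_COST):,.2f}")
```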

Use-Case Decision Matrix: Which Model for Which Task?

The right model depends entirely on the nature of the task. The following matrix maps common enterprise workloads to their optimal deployment strategy based on the 2026 capability and pricing landscape.

| Use Case | Recommended Model Type | Why |
|---|---|---|
| High-volume summarization & extraction | Open Source (self-hosted Llama 3 or Qwen) | Repetitive tasks at scale make API costs prohibitive; open models handle this at ~$0.56/1M tokens |
| Complex multi-step reasoning | Proprietary (GPT-5.4 Pro or o3) | Closed models still hold a frontier advantage in logical chain-of-thought tasks |
| Legal & financial document analysis | Proprietary (Claude Opus 4.6) | 1M token context window handles entire contracts; enterprise safety guardrails required |
| AI-powered coding assistant | Open Source (Mistral or Qwen3.5) | Strong code benchmarks at a fraction of the cost; can be fine-tuned on internal codebases |
| Customer support chatbot | Open Source (fine-tuned Llama 3) | Fine-tuning on company-specific data outperforms generic closed models; full data privacy |
| Healthcare / defense data processing | Open Source (on-premises deployment) | HIPAA/GDPR compliance requires data never leaves internal infrastructure |
| Multimodal image + text workflows | Proprietary (Gemini 3 Pro or GPT-5.4) | 60.6% of proprietary models support vision vs. 27.6% of open models |
| Low-volume prototyping & R&D | Proprietary API (pay-per-use) | Zero infrastructure overhead makes closed APIs cheaper at under 1B tokens/month |

Top AI Models of 2026: The Definitive Roundup

The performance ceiling is currently set by proprietary models, but open-source alternatives dominate the efficiency and value metrics. Based on the latest 2026 evaluations, the landscape is highly competitive.

- Top Proprietary Models: OpenAI's GPT-5.4 Pro leads the pack with a score of 94, though it costs a premium $30.00 input / $180.00 output per 1M tokens and features a 1.1M context window. It is closely followed by the standard GPT-5.4, GPT-5.4 Thinking, and Anthropic's Claude Opus 4.6 (score 92, $5.00 input / $25.00 output/1M). Other notable contenders include OpenAI's o3 Deep Research, Google's Gemini 3 Flash Preview, and xAI's Grok 4.1 Fast, which offers a massive 2M context window for just $0.20 input / $0.50 output/1M.

- Top Open Source Models: Alibaba's Qwen3.5-9B ties for the top open-source spot with a score of 85, costing merely $0.100/1M. It shares this tier with Moonshot AI's Kimi K2.5, Zhipu AI's GLM 4.6V, and Alibaba's Qwen3 VL 8B Thinking. NVIDIA's Nemotron 3 Super and MiniMax M2.5 also rank highly, notably offering free inference tiers.

- Edge and Quantized Models: For highly constrained environments, models like Microsoft's Phi-2 demonstrate the power of quantization. Testing shows that the Phi-2.Q5_K_M (5-bit) configuration achieves a 70% exact match and 87% ROUGE-L score, marginally outperforming its 6-bit and 8-bit counterparts by preserving finer granularity.
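For readers unfamiliar with the two metrics quoted for the quantized Phi-2 results, the sketch below shows one common way to compute them. It is an illustration only: the source does not say which tokenizer or ROUGE-L variant was used, so the whitespace tokenization, lowercasing, and F1 form here are assumptions.

```python
def exact_match(prediction: str, reference: str) -> bool:
    """True when the strings match after trimming and lowercasing (assumed normalization)."""
    return prediction.strip().lower() == reference.strip().lower()


def lcs_length(a: list, b: list) -> int:
    """Length of the longest common subsequence, via row-by-row dynamic programming."""
    prev = [0] * (len(b) + 1)
    for tok_a in a:
        curr = [0]
        for j, tok_b in enumerate(b, start=1):
            if tok_a == tok_b:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(prediction: str, reference: str) -> float:
    """ROUGE-L as the F1 of LCS-based precision and recall over whitespace tokens."""
    pred = prediction.lower().split()
    ref = reference.lower().split()
    if not pred or not ref:
        return 0.0
    lcs = lcs_length(pred, ref)
    if lcs == 0:
        return 0.0
    precision = lcs / len(pred)
    recall = lcs / len(ref)
    return 2 * precision * recall / (precision + recall)


print(exact_match("Paris ", "paris"))
print(round(rouge_l_f1("the cat sat on the mat", "the cat lay on the mat"), 3))
```

Exact match rewards only fully correct answers, while ROUGE-L gives partial credit for overlapping word order, which is why the two scores in a quantization comparison can differ substantially.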

The Capability Gap: Where Proprietary Still Wins

Despite the rapid rise of open weights, proprietary models retain distinct advantages in specific, high-complexity domains. Frontier reasoning remains the strongest moat for closed ecosystems. OpenAI's o3-class models execute complex, multi-step logical reasoning that open source has not fully replicated, although models like DeepSeek R1 have narrowed this gap.

Safety tuning and alignment also heavily favor closed providers. Companies like Anthropic invest massive resources into Reinforcement Learning from Human Feedback (RLHF), ensuring enterprise-grade guardrails. Open models, by their nature, vary wildly in their safety properties and require internal teams to implement their own filtering mechanisms.

Finally, while open models like Llama 3.2 and Qwen3 VL have introduced vision capabilities, proprietary models still lead in multimodal integration. Currently, 60.6% of proprietary models support vision tasks, compared to just 27.6% of open-source models. Function calling and JSON mode reliability also remain stronger in the closed ecosystem.

The Open-Source Advantage: Fine-Tuning on Proprietary Data

One of the most underappreciated advantages of open-weight models is the ability to fine-tune them on an organization's proprietary internal data. Unlike closed APIs, which offer no access to model weights, open models can be retrained on domain-specific datasets using frameworks such as Hugging Face PEFT (Parameter-Efficient Fine-Tuning) and Unsloth, often requiring only a single consumer-grade GPU.

This process produces a specialized model that outperforms a generic closed-source alternative in the target domain. A legal firm fine-tuning Llama 3 on its contract archive, or a healthcare provider adapting Mistral on clinical notes, can achieve superior accuracy at a fraction of the cost of deploying a premium proprietary API. Furthermore, the resulting fine-tuned model weights remain entirely within the organization's infrastructure, ensuring full data sovereignty.
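To see why parameter-efficient fine-tuning can fit on modest hardware, consider LoRA, one of the methods PEFT implements. Instead of updating a full weight matrix of shape `d_out × d_in`, LoRA trains two low-rank factors with `rank × (d_in + d_out)` parameters. The sketch below compares the two counts; the layer count, matrix sizes, and rank are hypothetical values chosen for illustration, not the configuration of any specific model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdaptedMatrix:
    """Shape of one weight matrix that receives a LoRA adapter."""
    d_in: int
    d_out: int


def full_params(m: AdaptedMatrix) -> int:
    """Parameters updated if the whole matrix were fine-tuned."""
    return m.d_in * m.d_out


def lora_params(m: AdaptedMatrix, rank: int) -> int:
    """Parameters in the two low-rank factors (rank x d_in and d_out x rank)."""
    return rank * (m.d_in + m.d_out)


# Hypothetical setup: 32 layers, two 4096x4096 projections adapted per layer, rank 16.
LAYERS = 32
MATRICES_PER_LAYER = [AdaptedMatrix(4096, 4096), AdaptedMatrix(4096, 4096)]
RANK = 16

total_full = LAYERS * sum(full_params(m) for m in MATRICES_PER_LAYER)
total_lora = LAYERS * sum(lora_params(m, RANK) for m in MATRICES_PER_LAYER)

print(f"Full fine-tuning of adapted matrices: {total_full:,} parameters")
print(f"LoRA adapters at rank {RANK}: {total_lora:,} parameters")
print(f"Trainable fraction: {total_lora / total_full:.2%}")
```

Under these assumed shapes, the adapters hold under 1% of the parameters of the matrices they modify, which is the main reason gradient and optimizer memory shrink enough for a single GPU.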

Hugging Face serves as the central hub for this ecosystem, hosting over 900,000 open models, datasets, and fine-tuning tools. It is the primary platform used by researchers and engineers globally to discover, evaluate, and deploy open-weight models, making it an essential resource for any organization evaluating open-source AI.

The Real Costs of Open Source: Challenges Organizations Must Not Ignore

Despite their compelling economics, open-weight models introduce operational complexities that organizations must honestly assess before committing. The true total cost of ownership (TCO) extends well beyond the per-token price and must account for the following factors.

- MLOps Overhead: Self-hosting a 70B+ parameter model requires dedicated machine learning operations (MLOps) expertise. Provisioning GPU clusters, managing model versioning, monitoring inference performance, and handling hardware failures are ongoing engineering responsibilities that proprietary API providers abstract away entirely.

- Security and Update Management: Closed providers continuously patch their models against newly discovered vulnerabilities and update safety guardrails. With open-weight models, the organization assumes full responsibility for monitoring CVEs, applying updates, and implementing its own content filtering layers.

- Variable Safety Properties: Open models vary significantly in their alignment and refusal behavior. Without a dedicated red-teaming and evaluation process, deploying an unvetted open model into a customer-facing product carries measurable reputational and legal risk.

- GPU Infrastructure Costs: Running a 70B model in production requires at minimum two NVIDIA A100 80GB GPUs. At AWS on-demand pricing, this represents approximately $12 to $18 per hour, meaning infrastructure must run at high utilization to justify the economics over a managed API.

These challenges do not negate the open-source value proposition; they contextualize it. For organizations with existing MLOps teams and high inference volumes, the economics strongly favor open models. For smaller teams without dedicated ML infrastructure, a managed API remains the more pragmatic choice.
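The utilization point can be made concrete with a rough break-even calculation. The sketch below assumes an always-on instance billed at the hourly figures from the GPU cost bullet, compares it with the $9.69 per 1M token proprietary average from the earlier table, and ignores staffing, storage, and whether the hardware can actually serve the resulting volume. All of those simplifications are assumptions, so treat the output as an order-of-magnitude guide.

```python
HOURS_PER_MONTH = 730  # 8,760 hours per year divided by 12
PROPRIETARY_AVG_PRICE = 9.69  # USD per 1M tokens, from the comparison table


def self_host_monthly_cost(hourly_rate: float) -> float:
    """Monthly cost in USD of keeping the GPU instance running around the clock."""
    return hourly_rate * HOURS_PER_MONTH


def break_even_tokens(hourly_rate: float, api_price_per_million: float) -> float:
    """Monthly token volume at which API spend equals the self-hosting bill."""
    return self_host_monthly_cost(hourly_rate) / api_price_per_million * 1_000_000


for rate in (12.0, 18.0):
    tokens = break_even_tokens(rate, PROPRIETARY_AVG_PRICE)
    print(
        f"${rate:.0f}/hour -> ${self_host_monthly_cost(rate):,.0f}/month, "
        f"break-even near {tokens / 1e9:.2f}B tokens/month"
    )
```

Under these assumptions the break-even lands at roughly 0.9B to 1.4B tokens per month, which is consistent with the guidance that managed APIs are the cheaper option below about 1B tokens per month.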

Actionable Guide: How to Choose and Deploy Your AI Model

Selecting the right AI infrastructure requires balancing privacy, volume, and technical capacity. Work through the following steps in sequence to architect a resilient AI strategy.

- Assess Your Data Privacy Requirements: If your application handles highly sensitive healthcare, financial, or defense data, you must default to open-source models deployed on-premises. If data can safely leave your servers, closed APIs are viable.

For organizations operating under GDPR (General Data Protection Regulation) in the European Union, or HIPAA (Health Insurance Portability and Accountability Act) in the United States, the use of third-party cloud APIs introduces significant compliance risk. Under GDPR Article 28, any processor of personal data must be contractually bound and cannot transfer data outside the EU without adequate safeguards. HIPAA similarly prohibits transmitting Protected Health Information (PHI) to vendors without a signed Business Associate Agreement (BAA). While major providers like OpenAI and Anthropic offer enterprise BAAs, self-hosting an open-weight model on internal infrastructure eliminates this third-party dependency entirely and provides the strongest possible compliance posture.

- Calculate the Token Math: Estimate your monthly inference volume. For low-volume prototyping (e.g., 1 million tokens per month), closed APIs are cheaper due to zero infrastructure costs. For high-volume production (e.g., 1 billion tokens), renting dedicated GPUs for open-source models yields dramatic savings.

- Select Your Deployment Method: For local development, utilize tools like Ollama or LM Studio to run quantized models on standard hardware. For production self-hosting, deploy via vLLM or Text Generation Inference (TGI) on cloud GPUs.

- Leverage No-Code Platforms: If your team lacks DevOps expertise, using an API gateway or visual builder allows you to connect to closed APIs (OpenAI, Anthropic) or managed open-source deployments through a unified visual interface, enabling rapid prompt management and cost control without writing code.

- Implement a Hybrid Routing Strategy: Do not rely on a single provider. Route complex reasoning tasks to GPT-5.4 or Claude Opus, while directing high-volume, repetitive tasks (like summarization or extraction) to a locally hosted Llama 3 or Qwen model.
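The hybrid routing step can be sketched as a small dispatcher that maps a task type to a backend. Everything named here is hypothetical: the task labels, the route labels, and the two stub functions stand in for real clients (for example, a locally hosted open model and a proprietary API) that an actual deployment would supply.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple

# A backend is any callable that takes a prompt and returns a completion.
Backend = Callable[[str], str]


@dataclass(frozen=True)
class Route:
    label: str
    backend: Backend


class HybridRouter:
    """Sends each task type to its configured route, with a fallback for unknown types."""

    def __init__(self, routes: Dict[str, Route], fallback: Route) -> None:
        self._routes = dict(routes)
        self._fallback = fallback

    def complete(self, task_type: str, prompt: str) -> Tuple[str, str]:
        route = self._routes.get(task_type, self._fallback)
        return route.label, route.backend(prompt)


# Stub backends for illustration; replace with real model clients.
def local_open_model(prompt: str) -> str:
    return f"[local] {prompt[:40]}"


def frontier_api(prompt: str) -> str:
    return f"[frontier] {prompt[:40]}"


LOCAL = Route("self-hosted-open-model", local_open_model)
FRONTIER = Route("proprietary-frontier-api", frontier_api)

router = HybridRouter(
    routes={
        "summarization": LOCAL,
        "extraction": LOCAL,
        "complex_reasoning": FRONTIER,
    },
    fallback=LOCAL,  # assumed policy: unknown tasks go to the cheaper backend
)

label, reply = router.complete("summarization", "Summarize the Q3 incident report.")
print(label, "->", reply)
```

Because the router depends only on the `Backend` callable signature, swapping one provider for another means changing a single `Route` entry rather than the calling code, which is the model-agnostic property the strategy relies on.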

My Take: The Commoditization of Core Intelligence

The data from 2026 paints a clear picture: raw artificial intelligence is rapidly becoming a commodity. When an open-source model like Qwen3.5-9B can achieve a benchmark score of 85 for just $0.100 per million tokens, the justification for paying $180.00 per million output tokens for GPT-5.4 Pro (score 94) becomes incredibly narrow. That premium is only viable for the absolute bleeding edge of complex reasoning or massive 1.1M context window requirements. For 90% of enterprise use cases, the open-source baseline is now more than sufficient.

This dynamic shifts the balance of power away from model providers and directly into the hands of developers and businesses. The true value in the AI ecosystem is no longer in possessing the smartest foundational model, but in the proprietary data used for fine-tuning and the specific application workflows built around it. Companies that architect their systems to be model-agnostic, using standardized API gateways, will be the ultimate winners.

Looking ahead, we will see a massive proliferation of hyper-specialized, fine-tuned open models dominating specific industries, while closed models will serve as general-purpose orchestrators. The strategic imperative for 2026 is clear: prototype with the convenience of closed APIs, but build your long-term infrastructure on the economics and sovereignty of open source.