Breakthrough in 2D Material Integration for Brain-Like Vision

Researchers have demonstrated homogeneous integration of two-dimensional (2D) material-based optoelectronic neurons and ferroelectric synapses, enabling in-sensor spiking neural networks (SNNs) that process dynamic visual scenes quickly and with high energy efficiency. The advance addresses the need for edge computing in vision systems, where traditional architectures struggle with power demands.

Why This Matters

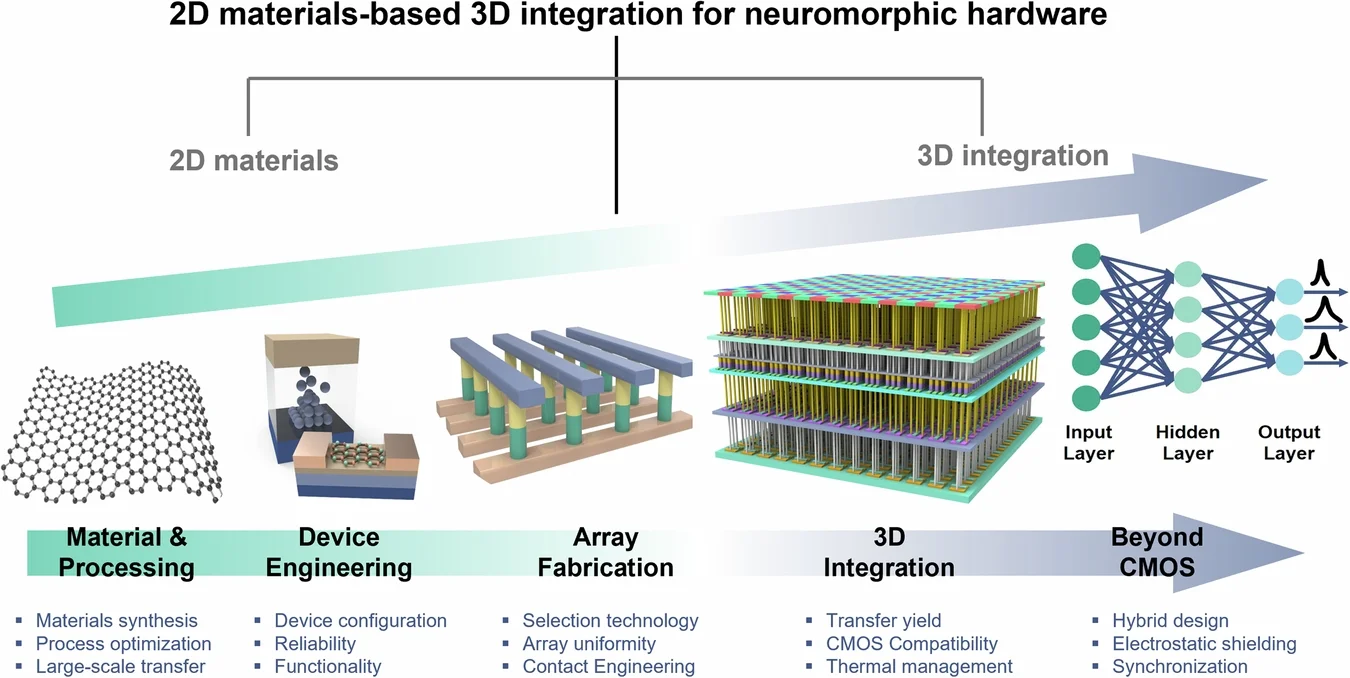

This innovation matters for energy-constrained environments such as wearables and autonomous devices, where processing visual data on-device reduces latency and bandwidth requirements. By mimicking biological neural processes, these 2D structures promise better energy efficiency than silicon-based systems, potentially cutting the power consumed by AI vision tasks. For engineers and researchers, the work opens a path to scalable neuromorphic hardware that operates on ambient light rather than relying on power-hungry lasers.

Technical Deep-Dive: How It Works

The system uses atomically thin 2D materials such as MoS2, WS2, and h-BN to build optoelectronic random-access memories (ORAMs) and synaptic devices. The optoelectronic neurons combine transparent phototransistors with modulators, achieving nonlinear self-amplitude modulation of incoherent broadband light with only 20% photon loss and a threshold as low as 56 μW/cm². The ferroelectric synapses provide nonvolatile, multibit storage with high ON/OFF ratios and high endurance, driven by charge trapping and filament formation mechanisms.

- Broadband Response: Covers visible wavelengths, ideal for real-world lighting.

- Low Power: Energy per activation as low as 69 fJ, far below conventional systems.

- Integration: Stacked heterostructures enable separate optical feedforward and electronic feedback paths for winner-takes-all networks.
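The winner-takes-all behavior mentioned in the last bullet can be illustrated with a small toy model. This is only a sketch under stated assumptions, not the published device model: the weighted sum stands in for the optical feedforward path, the mutual-inhibition loop stands in for the electronic feedback path, and the function names, weights, input values, and the `inhibition`, `dt`, and `steps` parameters are all hypothetical.

```python
def feedforward_drive(light, weights):
    """Weighted sum of input light per neuron (stand-in for the optical feedforward path)."""
    return [sum(w * x for w, x in zip(row, light)) for row in weights]


def winner_takes_all(drive, inhibition=1.2, dt=0.1, steps=500):
    """Relax neuron activities under mutual inhibition (stand-in for the electronic feedback path)."""
    activity = [0.0] * len(drive)
    for _ in range(steps):
        total = sum(activity)
        updated = []
        for a, d in zip(activity, drive):
            # Each neuron is suppressed by the summed activity of all the other neurons.
            net = max(0.0, d - inhibition * (total - a))
            # Leaky relaxation toward the rectified net input (explicit Euler step).
            updated.append(a + dt * (net - a))
        activity = updated
    winner = max(range(len(activity)), key=activity.__getitem__)
    return winner, activity


# Hypothetical normalized pixel intensities and synaptic weights (illustrative only).
light = [0.9, 0.1, 0.8, 0.2]
weights = [
    [1.0, 0.0, 1.0, 0.0],
    [0.0, 1.0, 0.0, 1.0],
    [0.5, 0.5, 0.5, 0.5],
]
winner, activity = winner_takes_all(feedforward_drive(light, weights))
print(winner, [round(a, 3) for a in activity])
```

With these illustrative values, the first neuron receives the strongest drive and its feedback silences the other two, so only one output stays active. An inhibition strength above 1 is what makes the competition decisive in this toy model; weaker inhibition would let several neurons remain partially active.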

Compared to oxide or perovskite alternatives, 2D ORAMs excel in responsivity, retention, and efficiency, though programming voltages need further reduction.
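To put the 69 fJ per-activation figure in context, the back-of-the-envelope calculation below scales it to a whole array. It is a rough sketch, assuming one activation per pixel per frame and a hypothetical frame rate of 30 frames per second; it counts activation energy only and ignores readout, programming, and any peripheral circuitry. The 10,000-pixel array size is the one this article uses in its smartphone scenario, and the function names are illustrative.

```python
ENERGY_PER_ACTIVATION_J = 69e-15  # 69 fJ per activation, as quoted in the article


def energy_per_frame_joules(num_pixels, activations_per_pixel=1):
    """Activation energy for one frame, assuming every pixel fires a fixed number of times."""
    return num_pixels * activations_per_pixel * ENERGY_PER_ACTIVATION_J


def average_power_watts(num_pixels, frames_per_second, activations_per_pixel=1):
    """Average activation power at a given frame rate."""
    return energy_per_frame_joules(num_pixels, activations_per_pixel) * frames_per_second


pixels = 10_000  # array size from the article's smartphone scenario
fps = 30         # hypothetical frame rate, not from the article

print(f"{energy_per_frame_joules(pixels) * 1e12:.0f} pJ per frame")
print(f"{average_power_watts(pixels, fps) * 1e9:.1f} nW average")
```

Under these assumptions the array spends about 690 pJ per frame, or roughly 20.7 nW on average, on neuron activations alone.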

Realistic Scenario: Enhancing Everyday Devices

Imagine a smartphone camera that uses a 10,000-pixel neuron array to instantly reduce glare from headlights during night drives, preserving details of pedestrians while blocking blinding lights. Integrated into cellphone imaging, the array processes incoherent ambient light on-sensor. This would enable safer driver-assistance features without cloud dependency, a boon for human drivers who rely on clear vision in adverse conditions.
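The glare-reduction idea can be sketched with a simple per-pixel intensity-dependent transmission. The saturable form used here is an assumption chosen for illustration, not the measured device response. It treats the quoted 20% photon loss as the low-light transmission ceiling (0.8) and the quoted 56 μW/cm² as the intensity at which transmission starts to fall. The scene values and function names are hypothetical.

```python
THRESHOLD_UW_CM2 = 56.0  # threshold intensity quoted in the article
MAX_TRANSMISSION = 0.8   # assumption: the quoted 20% photon loss applies at low intensity


def transmitted_intensity(intensity_uw_cm2):
    """Toy saturable response: near-linear for dim light, compressed for bright light."""
    transmission = MAX_TRANSMISSION / (1.0 + intensity_uw_cm2 / THRESHOLD_UW_CM2)
    return transmission * intensity_uw_cm2


def suppress_glare(frame):
    """Apply the per-pixel response independently to every pixel of a 2D frame."""
    return [[transmitted_intensity(p) for p in row] for row in frame]


# Hypothetical 100 x 100 night scene (10,000 pixels): dim background plus a bright headlight patch.
frame = [[5.6] * 100 for _ in range(100)]
for r in range(45, 55):
    for c in range(45, 55):
        frame[r][c] = 5600.0

out = suppress_glare(frame)
contrast_in = frame[50][50] / frame[0][0]
contrast_out = out[50][50] / out[0][0]
print(f"headlight-to-background contrast: {contrast_in:.0f}x in, {contrast_out:.1f}x out")
```

In this toy scene the headlight is about 1000 times brighter than the background at the input and only about 11 times brighter at the output, while the dim background passes with close to the 20% loss. Because each pixel responds only to its own intensity, no frame-level computation or external processor is needed in this model.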

Forward-Looking Implications

Future cascades of these arrays with diffractive processors could build full nonlinear optical networks for computational imaging, extending to security and machine vision. As wafer-scale fabrication matures, 2D neuromorphic systems may power next-generation AI hardware, tackling unsupervised clustering and optimization problems in a biologically inspired way and helping edge AI move from power-hungry to sustainable. Challenges remain in scaling and in reducing programming voltages, but the roadmap points to viable brain-inspired hardware.

Historically, research on 2D materials progressed from characterizing the properties of isolated materials such as MoS2 and black phosphorus to integrated ORAMs, with recent stacks enabling memristive neurons. Related efforts, such as ORNL's work on twisted layers, hint at tunable thermal properties that could improve efficiency further. Together, these developments position 2D technology as a frontrunner in energy-efficient computing.