Grammarly's new Expert Review AI feature is generating writing suggestions by mimicking the identities of prominent tech journalists and authors without their permission. The tool, recently launched by Grammarly's parent company Superhuman, claims to offer industry-relevant perspectives but has sparked significant privacy and ethical concerns regarding the unauthorized use of professional reputations.

For writers, editors, and digital professionals relying on AI assistants, this development highlights a critical intersection of data privacy and AI ethics. Understanding how these tools source and attribute their AI-generated feedback is essential for maintaining editorial integrity and avoiding misleading advice in professional workflows.

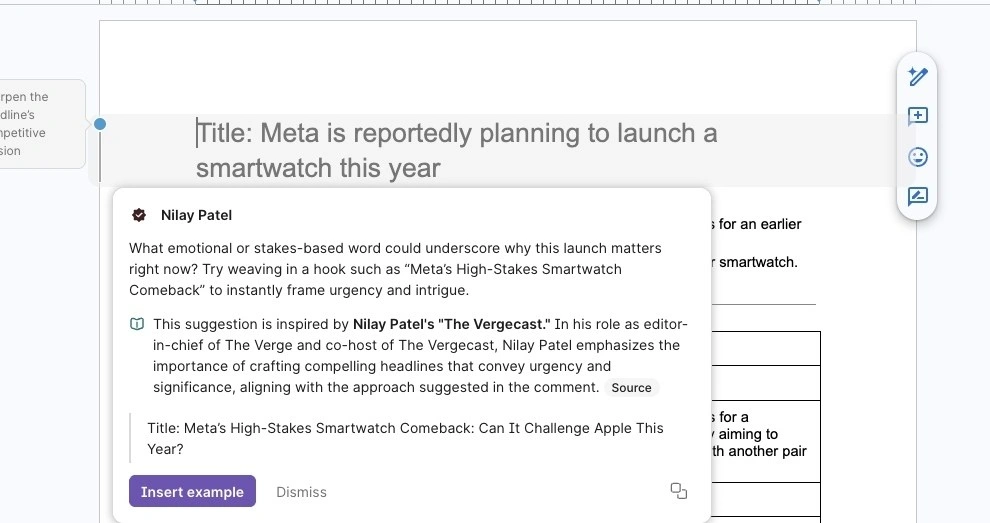

Launched in August, the feature analyzes a user's writing and surfaces AI-generated suggestions supposedly inspired by subject matter experts. However, numerous tech journalists discovered that their names were being used without consent. Those named include The Verge's Nilay Patel, David Pierce, Sean Hollister, and Tom Warren, alongside well-known figures such as Stephen King, Neil deGrasse Tyson, and Carl Sagan. Journalists from Bloomberg, The New York Times, and Wired, including Mark Gurman and Jason Schreier, also appear in the system, listed with outdated job titles.

Superhuman's Defense and Technical Flaws

Alex Gay, vice president of product and corporate marketing at Superhuman, stated that the Expert Review agent does not claim to be endorsed by these experts. Instead, he argued, the feature provides suggestions inspired by their publicly available and widely cited works. Despite this defense, the implementation has proven highly problematic for users trying to verify the AI's sources.

The feature frequently crashes and often directs users to spammy copies of legitimate websites or archived pages rather than the original source material. In some instances, the AI links to completely unrelated works not authored by the person it claims to be mimicking. Furthermore, the user interface in Google Docs presents these AI suggestions in a format that closely resembles comments from real users, potentially misleading writers into believing they are receiving genuine expert edits.

The AI also fails to accurately replicate the actual editing styles of the individuals it impersonates. For example, suggestions attributed to Sean Hollister recommended adding unnecessary parenthetical context, directly contradicting his real-world preference for straightforward wording and the removal of repetitive explanations. This discrepancy underscores the limitation of training an AI solely on published writing without understanding the underlying editorial logic.

Frequently Asked Questions

What is the Grammarly Expert Review feature?

It is an AI tool introduced by Superhuman that analyzes text and provides writing suggestions supposedly inspired by famous authors and industry experts.

Did the experts give permission to be used in Grammarly's AI?

No. The journalists and experts named in the feature did not grant permission. Superhuman says the suggestions are inspired by the experts' publicly available, widely cited works and do not claim their endorsement.

Are the AI suggestions accurate to the experts' real editing styles?

Reports indicate the AI often fails to replicate the actual editing preferences of the individuals it mimics and sometimes links to incorrect or spammy sources.

My Take

The controversy surrounding Grammarly's Expert Review exposes a fundamental flaw in the current generative AI playbook: the assumption that publicly available work is fair game for commercial impersonation. By scraping the published work of journalists like Mark Gurman and Sean Hollister to create simulated editors, Superhuman has crossed the line from utility into unauthorized digital cloning. That the AI fails to edit like the real Hollister shows that ingesting someone's published writing is not the same as understanding their editorial logic. As AI companies continue to push boundaries, this incident will likely accelerate demands for strict opt-in rules governing how personal identities and professional reputations are used in commercial software.