If you are tired of constantly correcting your AI's code or guiding it through complex multi-step tasks, the newly announced GPT-5.5 is aimed at exactly that problem. OpenAI has officially launched the latest upgrade to the family of models powering its ChatGPT and Codex applications. This new generation focuses heavily on autonomous execution, and OpenAI is promising substantial gains in agentic coding, computer use, and early-stage scientific research.

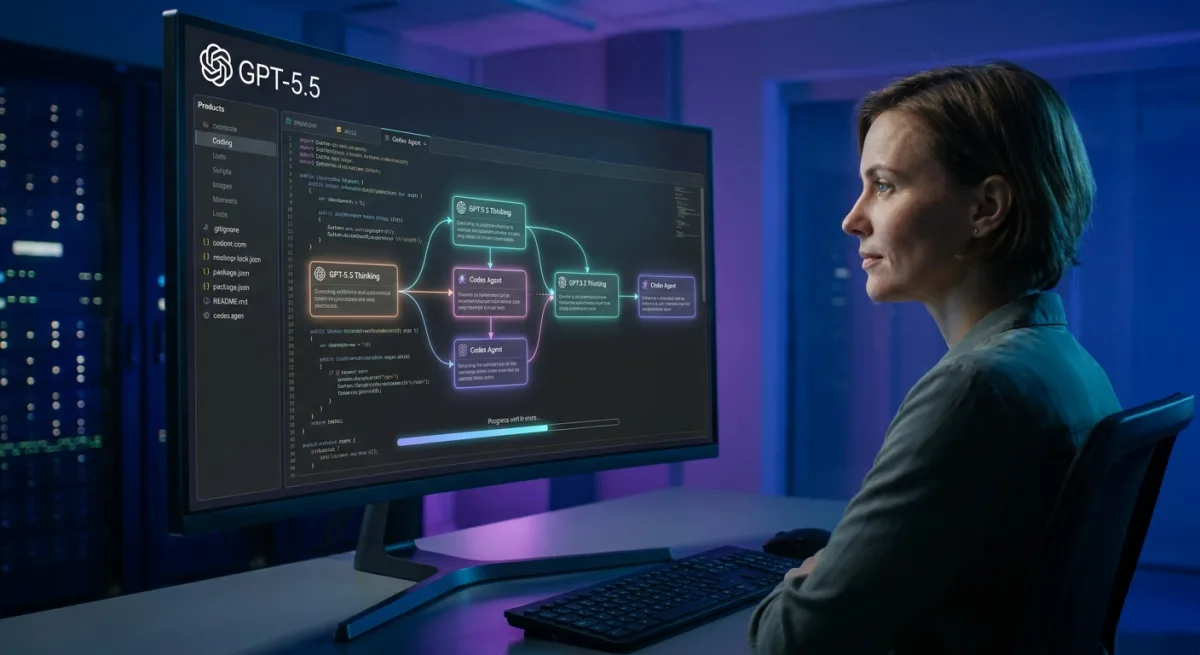

The latest upgrade is pitched as a more independent model that requires significantly less human intervention. According to the official announcement, the model can now plan workflows, use external tools, and verify its own output automatically. This shift aims to transform the AI from a simple conversational assistant into a capable digital worker.

How to Access the New GPT-5.5 Models

OpenAI is splitting the new capabilities into distinct tiers based on user needs and subscription levels. The rollout targets both everyday power users and enterprise-level researchers. Here is the official breakdown of availability:

- GPT-5.5 Thinking: Designed to offer faster help for harder problems. Available to ChatGPT Plus, Pro, Business, and Enterprise subscribers.

- GPT-5.5 Pro: Pitched as a dedicated research partner for tougher questions where accuracy matters more than speed. Limited to ChatGPT Pro, Business, and Enterprise tiers.

- Codex Integration: The coding-specific upgrades are available on Plus, Pro, Business, Enterprise, Edu, and Go plans.

- API Access: OpenAI states that developer access via the API is coming "very soon."

Token Efficiency and Agentic Coding

Beyond raw intelligence, OpenAI argues that its latest model is significantly more token-efficient. If that claim holds, complex Codex tasks should finish using fewer tokens despite the bump in capabilities. Developers relying on the platform for heavy coding sessions could see long tasks complete with lower token usage, though OpenAI's claim has yet to be tested independently.

The emphasis on agentic coding is particularly notable for software engineers. Because the model can verify its own output and use computer tools independently, developers can offload tedious debugging and multi-step script generation. This allows human engineers to focus on high-level architecture rather than line-by-line syntax correction.
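To make the plan, act, and verify pattern described above more concrete, here is a minimal Python sketch of such a loop. It is an illustration only, not OpenAI's implementation or API: `run_agent`, `StepResult`, `toy_act`, and `toy_verify` are hypothetical names, and the "model" is a toy stand-in that gets its first attempt wrong on purpose.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class StepResult:
    step: str
    output: str
    verified: bool
    attempts: int


def run_agent(
    plan: List[str],
    act: Callable[[str, str], str],
    verify: Callable[[str, str], Tuple[bool, str]],
    max_attempts: int = 3,
) -> List[StepResult]:
    """Run each planned step, retrying with verifier feedback until it passes."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    results: List[StepResult] = []
    for step in plan:
        feedback = ""
        for attempt in range(1, max_attempts + 1):
            output = act(step, feedback)           # hypothetical model or tool call
            ok, feedback = verify(step, output)    # self-check of the output
            if ok:
                break
        results.append(StepResult(step, output, ok, attempt))
        if not ok:
            break  # stop and hand the task back to a human
    return results


def toy_act(step: str, feedback: str) -> str:
    # Toy stand-in: the first attempt is deliberately wrong,
    # and any retry that receives feedback is corrected.
    return "2 + 2 = 4" if feedback else "2 + 2 = 5"


def toy_verify(step: str, output: str) -> Tuple[bool, str]:
    # Toy verifier: recompute the sum and compare it with the claimed result.
    left, right = output.split("=")
    a, b = (int(x) for x in left.split("+"))
    ok = a + b == int(right)
    return ok, ("" if ok else f"expected {a + b}")


if __name__ == "__main__":
    for result in run_agent(["check arithmetic"], toy_act, toy_verify):
        print(result)
```

In this sketch the human only steps in when a step still fails verification after `max_attempts` tries, which is the "less hand-holding" idea in miniature.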

The Shift Toward Autonomous AI Agents

The explicit focus on "less hand-holding" signals a critical pivot in OpenAI's product strategy. We are moving past the era of prompt-heavy chatbots and entering the phase of true AI agents. By granting the model the ability to plan and verify its own work, OpenAI is directly targeting enterprise workflows that demand reliability over simple text generation.

Furthermore, the strategic split between the "Thinking" and "Pro" models highlights a maturing market. OpenAI recognizes that speed is not always the priority; for early-stage scientific research and complex coding, users need a deliberate, highly accurate partner. This tiered approach is meant to give casual power users the snappy performance they expect, while enterprise clients get the rigorous accuracy they are paying a premium for.