Running local AI in 2026 has transformed from a hardware-heavy burden into a seamless, cost-free solution for developers and privacy-conscious users. While cloud giants like ChatGPT, Gemini, and Claude continue to release massive models, the fear that on-device inference would become obsolete has been entirely debunked. Today, running capable language models locally is a production-ready necessity for avoiding exorbitant API costs and protecting sensitive data.

This guide is specifically for developers, researchers, and businesses looking to integrate artificial intelligence without compromising on privacy or budget. By shifting to local models, users can achieve offline operation and zero per-token costs, fundamentally changing how enterprise and personal AI tools are deployed. The Reddit r/LocalLLaMA community has heavily documented this shift, proving that local setups are now the default for many workflows.

The Technical Shift: Why Local Models Survived

A few years ago, running a capable language model locally required serious hardware compromises and complex configurations. Today, that barrier has almost disappeared, allowing models in the 4B to 8B parameter range to handle everyday tasks fluently on a standard laptop. Furthermore, quantized 30B+ models now run surprisingly well on mid-range consumer GPUs.

According to industry analysis, several key technical breakthroughs drove this massive shift in accessibility. These innovations have allowed developers to bypass the massive server farms previously required for high-level inference.

- Mixture-of-Experts (MoE): These architectures reduced computation requirements by over 70% while preserving high-level performance.

- Advanced Quantization: The adoption of 4-bit and 5-bit quantization shrank model sizes significantly without major quality loss.

- Domain-Specialized Models: Smaller, focused models now frequently outperform larger generalist cloud models on specific, targeted tasks.

Local vs. Cloud AI: The 2026 Landscape

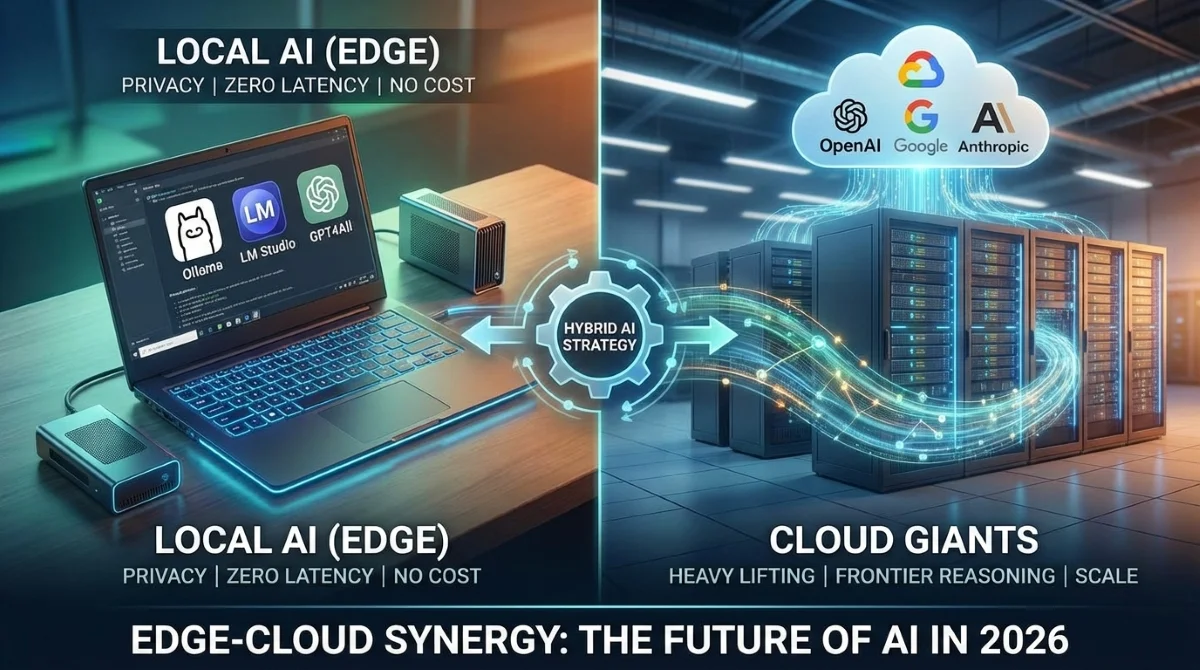

The decision between local and cloud infrastructure is no longer about which is inherently better, but rather which tool fits the specific job. Local models offer concrete advantages that cloud services simply cannot match, particularly regarding data sovereignty. However, as noted in recent comparisons, cloud platforms still dominate when raw computational power is required.

| Feature | Local AI Models | Cloud AI Services |

|---|---|---|

| Data Privacy | 100% private; prompts never leave the device. | Data is processed on external corporate servers. |

| Operating Cost | Zero per-token cost; no monthly subscriptions. | Recurring API fees and usage caps apply. |

| Latency | Ultra-low latency (10 to 50 milliseconds). | Higher latency (100 to 500+ milliseconds). |

| Reasoning Power | Excellent for daily tasks and coding assistance. | Superior for frontier reasoning and complex logic. |

| Hardware Needs | Requires a capable CPU or mid-range GPU. | No local hardware required; scales instantly. |

Top Models and Tools to Try

Setting up a local environment is now a matter of minutes rather than days. Tools like Ollama, LM Studio, and GPT4All have streamlined the installation process, offering intuitive interfaces for managing multiple models. For developers, the community is currently rallying around the Qwen 3 Coder 80B model for advanced programming assistance.

For general use, the Llama 3 variants remain the top choice across the industry. The 70B version of Llama 3 is actively competing directly with GPT-4 on complex reasoning tasks. While a 4B model can largely replace ChatGPT for daily writing and summarizing, deep research still benefits from larger architectures.

My Take: The Hybrid Era is Here

The debate over whether local AI is dead has been definitively answered by the sheer performance metrics we are seeing in 2026. When local inference runs at an ultra-low latency of 10 to 50 milliseconds compared to the 100 to 500 milliseconds required for cloud requests, the user experience on-device is undeniably superior for rapid tasks. This speed advantage, combined with absolute data privacy, makes local models indispensable for enterprise applications.

However, the smartest approach moving forward is a hybrid architecture. As noted in recent architectural discussions, relying solely on one ecosystem limits potential. Users should deploy local models for private, repetitive, or cost-sensitive tasks, while reserving cloud models for heavy reasoning or tasks requiring massive 1M+ token context windows.

Found this useful? Share it with a developer still overpaying for cloud APIs.