Instagram is introducing a critical safety update designed to bridge the gap between teen privacy and parental awareness regarding mental health. Starting next week, the platform will actively notify parents if their child repeatedly searches for terms clearly associated with self-harm or suicide within a short period. This new intervention mechanism is specifically available for Teen accounts that have parental supervision protections enabled, marking a significant shift in how Meta handles sensitive search intent on its platforms.

The rollout is scheduled to begin next week across the United States, the United Kingdom, Australia, and Canada. Meta has confirmed that this feature is not automatic for all users; it requires both the parent and the teen to have opted into the platform's supervision tools. While the immediate focus is on search queries within the Instagram app, Meta has also disclosed plans to implement a similar alert system for its AI chatbots later this year, expanding the safety net to conversational interfaces.

How the Alert System Works

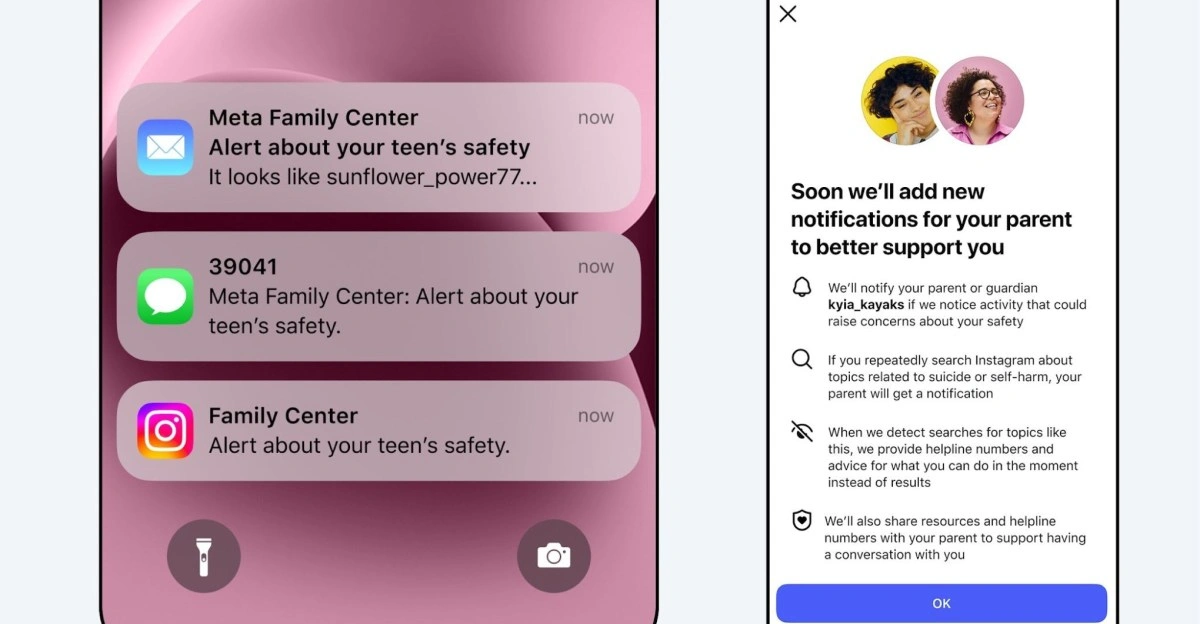

The core functionality of this update revolves around detecting patterns of distress. According to Meta, the system triggers an alert only when a teen "repeatedly tries to search for terms clearly associated with suicide or self-harm within a short period of time." This threshold is designed to distinguish fleeting curiosity or an accidental search from a potential crisis. When the threshold is met, parents are notified by email, text message, or WhatsApp, depending on the contact information linked to their account, as well as through an in-app notification.
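Meta has not published how the threshold is implemented, but the idea of "repeated searches within a short period" can be illustrated with a sliding-window counter. The sketch below is purely hypothetical: the class name `RepeatedSearchDetector`, the threshold of three searches, and the ten-minute window are assumptions made for illustration, not values from Meta. It also assumes searches are recorded in chronological order and that some upstream step has already decided which searches count as flagged.

```python
from collections import deque
from datetime import datetime, timedelta


class RepeatedSearchDetector:
    """Illustrative sliding-window counter; not Meta's implementation."""

    def __init__(self, threshold=3, window=timedelta(minutes=10)):
        # Both defaults are hypothetical; Meta has not disclosed its values.
        self.threshold = threshold
        self.window = window
        self._timestamps = deque()

    def record_flagged_search(self, when: datetime) -> bool:
        """Record one flagged search; return True if an alert should fire."""
        self._timestamps.append(when)
        # Drop searches older than the window that ends at `when`.
        while self._timestamps and when - self._timestamps[0] > self.window:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.threshold:
            # Reset after alerting so a single burst yields a single alert.
            self._timestamps.clear()
            return True
        return False
```

With these assumed settings, three flagged searches within ten minutes would trigger one alert, while single searches spaced an hour apart would never trigger one. Resetting the counter after an alert is one simple way to limit repeat notifications for the same burst of activity.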

In addition to the alert itself, the notification will include guidance and optional resources to help parents approach these sensitive conversations with their children. Instagram emphasized that the goal is to empower parents to step in when support is needed, rather than to police every interaction. The company stated that the vast majority of teens do not search for this content, and when they do, the platform's existing policy is to block the results and redirect the user to helplines. This new layer adds a human element, the parent, to the safety loop.

Rollout Details and Availability

| Feature Aspect | Details |
|---|---|
| Launch Date | Starting Next Week (Early March 2026) |
| Supported Regions | US, UK, Australia, Canada |
| Requirement | Active Parental Supervision (Opt-in) |
| Notification Channels | Email, Text Message, WhatsApp, In-app |

Meta aims to avoid "alert fatigue" by ensuring these notifications are not sent unnecessarily. The company noted that sending too many alerts could make them less useful overall, so the system is tuned to identify specific, repeated behaviors that suggest a genuine need for intervention. While currently limited to four predominantly English-speaking countries, the feature is expected to expand to other territories later in 2026.

Frequently Asked Questions

Will all parents receive these alerts automatically?

No. The alerts are only sent if the teen's account is connected to a parent's account via Instagram's supervision tools. Both parties must opt in to this feature.

Does this feature show parents exactly what the teen searched for?

Not according to the announcement as described. Parents are alerted that their teen has made searches related to suicide or self-harm and are prompted to check in. The emphasis is on providing resources for the conversation rather than a raw log of search terms, which preserves some of the teen's autonomy while still addressing safety.

My Take

This update represents a necessary evolution in digital parenting tools. For years, platforms have relied on algorithmic blocking, which often fails to address the root cause of a user's distress. By bringing parents into the loop via direct channels like WhatsApp, Meta is acknowledging that technology alone cannot solve mental health crises. However, the effectiveness of this tool hinges entirely on adoption; since it requires an opt-in for supervision, the most vulnerable teens, those without supportive parental structures, may remain outside this safety net. The upcoming integration with AI chatbots is particularly promising, as conversational AI presents a new frontier where mental health risks must be proactively managed.