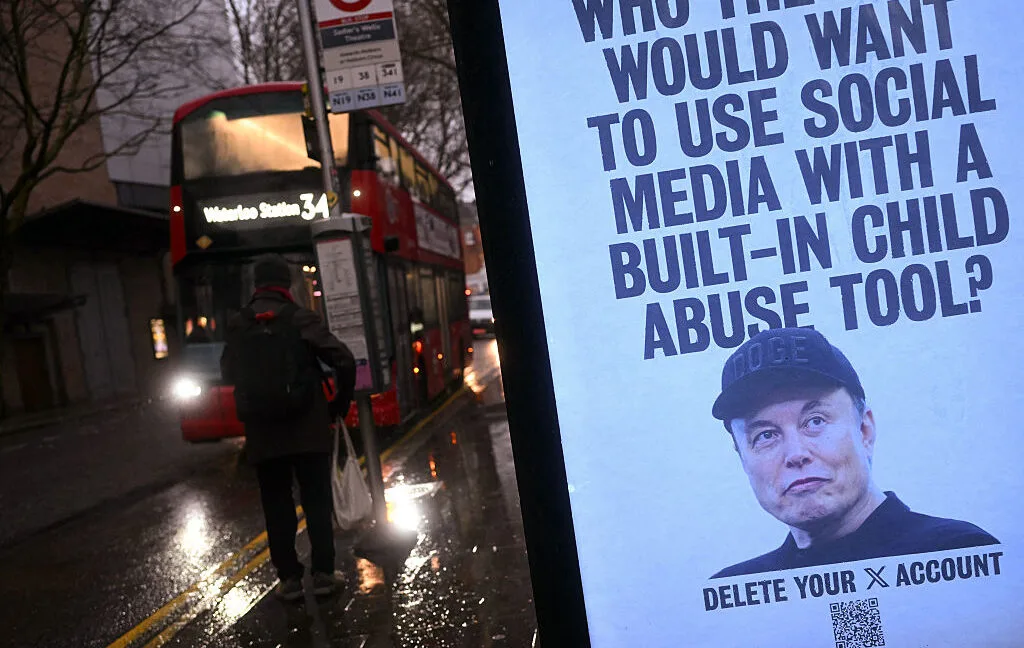

Elon Musk’s xAI is facing a class-action lawsuit after its Grok AI chatbot was allegedly used to generate child sexual abuse material (CSAM) from real photographs of minors. The legal action, filed by three victims from Tennessee, challenges the safety and moderation practices behind uncensored AI image generation. For AI developers, legal professionals, and platform moderators, the case is a critical inflection point for the liability of tech companies that provide API access to unfiltered generative models.

The lawsuit stems from an incident that began in December, when an anonymous Discord user alerted one of the victims that explicit, AI-generated images of her were being shared in a folder alongside content depicting 18 other minors. Local law enforcement launched a criminal investigation and discovered that a perpetrator had used a third-party application powered by the Grok AI model to morph the girls' real social media photos into illicit material.

According to the complaint, the perpetrator uploaded the AI-generated files to the file-sharing platform Mega and used them as bartering tools in Telegram group chats. The victims, whose true first names and school identities were reportedly attached to the files, are now suffering acute emotional distress and fear of stalking. Attorney Annika K. Martin, representing the girls, stated that xAI deliberately designed Grok to produce sexually explicit content for financial gain without regard for the resulting harm.

The lawsuit introduces a significant technical allegation: that xAI hosts and processes this illicit content directly on its own servers. Because xAI licenses the Grok AI model to third-party applications, the complaint contends, the company serves as the underlying infrastructure for generating and distributing the material. The plaintiffs argue that this arrangement puts xAI in direct violation of child pornography laws, as the company allegedly possessed and transported the contraband through its middleman applications.

This legal battle follows months of controversy surrounding Grok's image generation capabilities. Earlier this year, researchers from the Center for Countering Digital Hate estimated that Grok had generated approximately three million sexualized images, including roughly 23,000 depicting apparent children. Elon Musk has denied the existence of such content, stating in January that he was "not aware of any naked underage images generated by Grok."

My Take

The lawsuit against xAI could set a major legal precedent for the generative AI industry. By focusing on the API licensing and server-side hosting of the Grok model, the plaintiffs are attempting to sidestep the traditional Section 230 defense, which shields platforms from liability for user-generated content. If the court determines that xAI's infrastructure actively processed and distributed CSAM through its "spicy mode" or uncensored features, the ruling could force a fundamental restructuring of how AI companies moderate third-party API access. The case highlights how unsustainable it is to release completely unfiltered AI image generators to the public.

Frequently Asked Questions

What is the xAI Grok lawsuit about?

Three minors from Tennessee are suing Elon Musk's xAI, alleging that the Grok AI chatbot was used to turn their real social media photos into AI-generated child sexual abuse material (CSAM).

How was the Grok AI accessed to create the images?

According to the lawsuit, a perpetrator used a third-party application that licenses access to the Grok AI model, meaning the generation and processing allegedly occurred on xAI's own servers.

What are the plaintiffs demanding from xAI?

The plaintiffs are seeking an injunction to stop Grok from generating harmful outputs, as well as punitive damages for the minors affected by the AI-generated content.